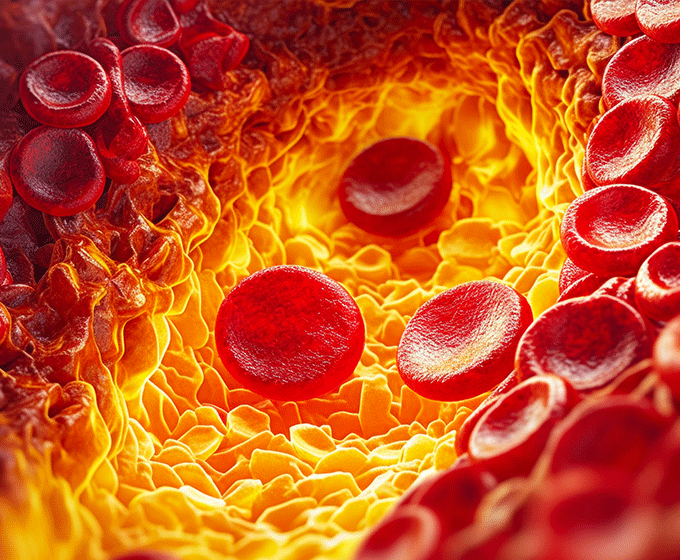

An image of cholesterol plaques inside an artery.

JANUARY 24, 2024 — Paul Rad, the associate director of research in the UTSA School of Data Science, and Marc Feldman, M.D., emeritus and adjoint professor of medicine in the Janey and Dolph Briscoe Division of Cardiology at UT Health San Antonio, are teaming up to develop a cutting-edge medical technology driven by artificial intelligence that identifies the exact composition of coronary heart disease.

Their innovation, a generative AI model based on existing optical arterial scans, would enable clinicians to view inside a person’s coronary artery in the heart catheterization laboratory to assess plaque build-up and future risk of heart attacks in real time.

Most people are familiar with medical imaging tools such as x-rays, ultrasounds and MRIs, but cardiologists need technology that is much more precise to see inside coronary arteries, which supply blood to the heart. This viewpoint is essential to predict future heart attacks, which are often caused by the buildup of fatty plaques that reduce arterial flow. These plaques can also rupture and trigger blood clots that can block the artery entirely, halt blood flow to the heart and cause tissue damage.

“We know at death, by autopsy, the characteristics of plaque that lead to heart attacks. They are usually large amounts of lipid covered by very thin caps of fibrous tissue,” said Feldman.

While an autopsy allows for definitive analysis of an artery via histology, or microscopic analysis, clinicians cannot microscopically analyze an artery while a person is alive.

Instead, clinicians use optical coherence tomography (OCT), a procedure that is most commonly used for retinal scans.

Several years ago, Feldman and former UT Austin engineering professor Thomas E. Milner collaborated and adapted the OCT technology to peek inside the coronary arteries of living patients.

Coronary OCT works by capturing the reflection of an infrared light emitted by a catheter inserted into the artery in question. This allows doctors to “see” inside an artery and provides images with much higher resolution than other imaging techniques. However, this level of detail also makes the images inherently more difficult to analyze.

“The problem is that this optical technique gives so much information, it’s almost too much,” Feldman explained, “so the physicians that are using it are overwhelmed by the amount of information and detail.”

Paul Rad

This is where artificial intelligence – and UTSA’s Rad – come into the picture. Rad and UTSA doctoral research assistant Paul Young are developing an algorithm that can interpret coronary OCT images faster and more reliably than humans.

“The goal is to build a generative AI model that can learn from the existing images Dr. Feldman has captured in his lab, so our model can predict heart attacks at the earliest stage and doctors can make impactful decisions to avoid potential heart damage in the future,” said Rad, who also serves as a core faculty member in the UTSA School of Data Science.

The images Rad will use are crucial to the project. Feldman and his team, including UT Health San Antonio senior research scientists Aleksandra Gruslova and Drew Nolen and research assistant Luis Diaz Sanmartin, have spent the last five years collecting approximately 2,000 OCT scans and histology images. Feldman believes it is the largest dataset of its kind in the world. They hope that by matching an OCT image to its corresponding histology, their AI model will begin learning to interpret other OCT images.

Rad says it’s not as easy as it sounds.

“The OCT sees a reflection of light in the material of the artery then Paul [Young] has to make sure the AI’s learning is adjusted based on the reflection of different materials because lipid is different from other materials in arteries,” Rad explained. “Basically, his model has to learn the physics of light.”

If the team’s model can learn the physics, the clinical implications could be groundbreaking. An AI model that can accurately identify what cardiologists see in real time will allow them to make on-the-spot treatment decisions, such as whether to place a biodegradable stent in a patient and which location is the safest for a patient who needs one. Just as important, the AI would enable a uniformity and consistency of analysis that humans struggle to match.

In addition, if the model became sufficiently advanced, it could be used to help humans better understand and interpret OCT imaging. From there, Feldman and Rad believe the possibilities for this technology are significant.

“Once you provide new information to a bunch of smart doctors, they’ll start creating new ways to apply or use it that we can’t foresee right now,” Feldman said.

Rad added, “This has the potential to change health care as we know it.”

Feldman’s lab is funded by The Clayton Foundation for Research, a Houston-based nonprofit medical research organization, and the National Institutes of Health. He and Rad are partnering with an OCT manufacturer and supplier of medical equipment to make their technology widely accessible when the project is completed, which Feldman anticipates will take several years.

UTSA Today is produced by University Communications and Marketing, the official news source of The University of Texas at San Antonio. Send your feedback to news@utsa.edu. Keep up-to-date on UTSA news by visiting UTSA Today. Connect with UTSA online at Facebook, Twitter, YouTube and Instagram.
