Technologies

Remote Sensing, GIS, and Geophysical Facility

Equipment

Item |

Quantity |

|---|---|

computers and workstations |

38 |

data and image server (20TB) |

1 |

electromagnetic induction meters (GSSI, Geonics) |

3 |

FM-CW snow radar system (customized) |

1 |

ground penetrating radar system (GSSI) |

1 |

plotter |

1 |

printer |

2 |

rain gauge (tipping bucket) |

8 |

scanner |

1 |

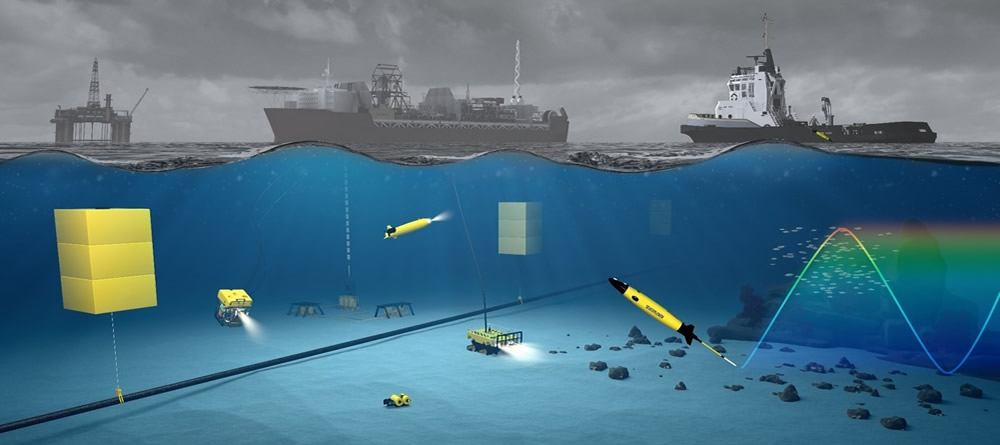

SEAEXPLORER glider (ALSEAMAR) |

1 |

spectroradiometer (ASD FR) |

1 |

terrestrial LiDAR scanner (Riegl VS-1000) |

1 |

thermal imaging camera (FLIR) |

1 |

Underway ice camera system (EISCam) |

1 |

Software Resources

| Software | Licenses |
|---|---|
| eCognition | 3 |
| ENVI/IDL | 25 |
| ERDAS IMAGINE | 5 |
| ESRI ArcGIS | UTSA site license |
| FLAASH | 3 |
| MATLAB | UTSA site license |


Turbulence, Sensing, and Intelligent Systems

Himalaya Supercomputing Cluster

A dedicated 48-node, quad-core computing cluster at UTSA, used mainly for code development, validation, and optimization tasks.

UTSA Simulator NVIDIA Graphical Cluster

High-performance simulations are run on CUDA-based GPU clusters with at least one teraflop of peak performance. The system consists of 8 × 16 GB DRx4 ECC memory modules, 4 NVIDIA Tesla K80 units, 2 × 2 TB SATA hard drives, 1 × 120 GB 2.5" SATA solid-state drive, and 2 Intel Xeon processors (12 to 16 cores each, 2.6 GHz), and it is covered by a 3-year replacement warranty.

Mobile Sensing

The laboratory is equipped with wind, temperature, surface flux, and gas sensors that can be mounted on mobile tripods. Additional mobile sensing is achieved by mounting sensors on unmanned aerial vehicles (UAVs). The laboratory uses a cooperative fleet of six automated UAVs with the following specifications:

- Quad-Rotor: +4, x4

- Hex-Rotor: +6, x6, Y6, Rev Y6

- Octo-Rotor: +8, x8, V8

- supported ESC output: 400 Hz refresh rate

- supported transmitter for built-in receiver: Futaba FASST series and DJI DESST series

- supported external receiver: Futaba S-Bus, S-Bus2, DSM2

- recommended battery: 2S~6S LiPo

- operating temperature: -5°C to +60°C

- assistant software system requirement: Windows XP SP3 / 7 / 8 (32 or 64 bit)

- other DJI products supported: Z15, H3-2D, iOSD, 2.4G Data Link, S800 EVO
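The battery and ESC entries above reduce to simple arithmetic: an nS LiPo pack has n cells in series, and a refresh rate sets the interval between ESC command updates. The sketch below assumes the standard LiPo chemistry figures of 3.7 V nominal and 4.2 V fully charged per cell (general values, not taken from the DJI documentation); the helper names are hypothetical.

```python
# Assumed standard LiPo per-cell voltages (general chemistry values, not DJI specs)
NOMINAL_V_PER_CELL = 3.7
FULL_V_PER_CELL = 4.2


def lipo_pack_voltages(cells):
    """Nominal and full-charge voltage of an nS (series) LiPo pack."""
    if cells < 1:
        raise ValueError("cell count must be positive")
    return {
        "nominal": round(cells * NOMINAL_V_PER_CELL, 2),
        "full": round(cells * FULL_V_PER_CELL, 2),
    }


def esc_update_period_ms(refresh_hz):
    """Time between ESC command updates, in milliseconds."""
    return 1000.0 / refresh_hz


if __name__ == "__main__":
    for n in range(2, 7):  # the recommended 2S to 6S range
        print(f"{n}S pack:", lipo_pack_voltages(n))
    print("ESC update period at 400 Hz:", esc_update_period_ms(400), "ms")
```

Under these assumptions the recommended 2S to 6S range spans roughly 7.4 V to 22.2 V nominal, and a 400 Hz refresh rate corresponds to a new ESC command every 2.5 ms.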

In-House Models

- Weather Research and Forecasting (WRF) Plume: The existing WRF model has been modified to include a new plume module. The plume module solves an additional turbulent transport equation on nested grids within a Large-Eddy Simulation (LES) framework. This version is now available for testing.

- Data Downloads: Simulated atmospheric boundary layer (ABL) data at 12-km and 4-km resolution are available for download for (a) Texas, (b) Iowa, (c) Idaho, (d) the Gulf of Mexico region, (e) Florida, (f) the California coast, and (g) the Atlantic coast.

- Density Currents on Sloping Regions: An in-house LES model has been developed to simulate density currents over sloping, rough bottoms and is available for download for research purposes.

- Direct Numerical Simulation (DNS) of Flow over Roughness: A DNS solver based on the immersed boundary method has been developed. High-Reynolds-number data for uniform and irregular roughness are available for download.
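To illustrate what solving an additional turbulent transport equation involves, the sketch below advances a passive scalar c (for example, plume concentration) under the 1-D advection-diffusion equation dc/dt + u dc/dx = K d2c/dx2 + S. This is a generic textbook discretization (forward Euler, first-order upwind advection, central diffusion, periodic boundaries) chosen for clarity; it is not the WRF-Plume numerics, which the source does not describe. The constant wind u, eddy diffusivity K, and source S are assumed inputs, and the function name is hypothetical.

```python
import numpy as np


def step_scalar(c, u, K, S, dx, dt):
    """Advance a passive scalar c one forward-Euler step on a periodic 1-D grid.

    Discretizes dc/dt + u dc/dx = K d2c/dx2 + S with constant velocity u,
    constant eddy diffusivity K, first-order upwind advection, and
    second-order central diffusion.
    """
    courant = abs(u) * dt / dx
    diffusion_number = K * dt / dx**2
    # Sufficient condition for a monotone (non-oscillatory) explicit update
    if courant + 2.0 * diffusion_number > 1.0:
        raise ValueError("time step too large for this explicit scheme")
    # Upwind gradient: difference toward the upstream neighbour
    if u >= 0:
        dcdx = (c - np.roll(c, 1)) / dx
    else:
        dcdx = (np.roll(c, -1) - c) / dx
    # Central second derivative; np.roll wraps the ends (periodic domain)
    d2cdx2 = (np.roll(c, -1) - 2.0 * c + np.roll(c, 1)) / dx**2
    return c + dt * (-u * dcdx + K * d2cdx2 + S)


if __name__ == "__main__":
    # Illustrative (hypothetical) parameters, not WRF-Plume settings
    x = np.linspace(0.0, 1.0, 200, endpoint=False)
    dx = x[1] - x[0]
    c = np.exp(-((x - 0.3) / 0.05) ** 2)  # initial Gaussian puff
    source = np.zeros_like(c)             # no emission in this example
    for _ in range(100):
        c = step_scalar(c, u=1.0, K=1e-4, S=source, dx=dx, dt=0.002)
    print("centre of mass:", (x * c).sum() / c.sum())
```

With no source, the scheme conserves total scalar mass and transports the puff's centre of mass at exactly u; the upwind term adds numerical diffusion, which is one reason production LES codes use higher-order advection schemes.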

Aerodynamics Facilities

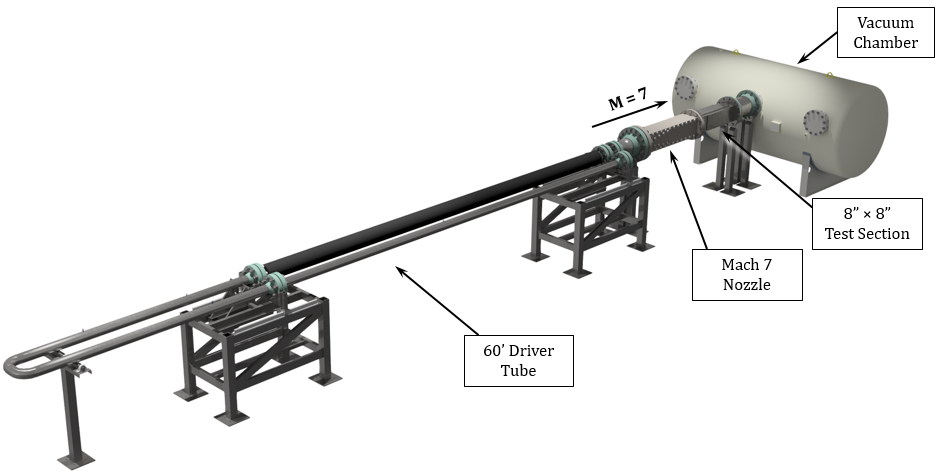

Mach 7 Wind Tunnel

- Ludwieg Tube Facility

- M = 7

- Re = 0.5–50 × 10⁶ m⁻¹

- Test Section = 8" x 8" (square)

- T₀ = 700 K (stagnation temperature)

- Test Time = 70 ms (×4 per burst)
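The unit Reynolds number range above follows from the stagnation conditions through isentropic relations. The sketch below estimates Re per metre for Mach 7 and a stagnation temperature of 700 K, assuming air as a calorically perfect gas (gamma = 1.4, R = 287.05 J/(kg K)) with viscosity from Sutherland's law. The stagnation pressure p0 is not given in the source, so it is a free, hypothetical input. Note that the Mach 7 static temperature (about 65 K) lies below the range where Sutherland's law is most accurate, so treat the result as an estimate.

```python
import math

GAMMA = 1.4      # ratio of specific heats for air (assumed calorically perfect)
R_AIR = 287.05   # specific gas constant of air, J/(kg K)


def sutherland_viscosity(T):
    """Dynamic viscosity of air (Pa s) from Sutherland's law."""
    mu_ref, T_ref, S = 1.716e-5, 273.15, 110.4
    return mu_ref * (T / T_ref) ** 1.5 * (T_ref + S) / (T + S)


def static_temperature(T0, M, gamma=GAMMA):
    """Isentropic static temperature from stagnation temperature."""
    return T0 / (1.0 + 0.5 * (gamma - 1.0) * M**2)


def unit_reynolds(p0, T0, M, gamma=GAMMA, R=R_AIR):
    """Freestream unit Reynolds number rho*u/mu, in 1/m."""
    T = static_temperature(T0, M, gamma)
    p = p0 * (T / T0) ** (gamma / (gamma - 1.0))  # isentropic pressure ratio
    rho = p / (R * T)                              # ideal-gas density
    u = M * math.sqrt(gamma * R * T)               # freestream velocity
    return rho * u / sutherland_viscosity(T)


if __name__ == "__main__":
    # Hypothetical stagnation pressures; the facility's p0 range is not stated
    for p0 in (0.2e6, 1.0e6, 5.0e6):
        re = unit_reynolds(p0, 700.0, 7.0)
        print(f"p0 = {p0 / 1e6:.1f} MPa -> Re/m = {re:.3g} 1/m")
```

At fixed Mach number and stagnation temperature, Re per metre is proportional to p0, so under these assumptions the quoted 100:1 Reynolds number range corresponds to a 100:1 range in stagnation pressure.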

Low-Speed Wind Tunnel

- Open-Loop Blowdown Facility

- Freestream Velocity = 40 m/s

- Test Section = 1.5' × 1.5' (square)

- Freestream Turbulence Intensity < 0.3%
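Freestream turbulence intensity is conventionally the RMS of the velocity fluctuation divided by the mean velocity, Tu = u'_rms / U. The sketch below computes it from a velocity record such as hot-wire samples. It assumes the streamwise-only definition, since the source does not state which definition the < 0.3% figure uses; the function name and the synthetic record are hypothetical.

```python
import math
from statistics import fmean, pstdev


def turbulence_intensity(u_samples):
    """Turbulence intensity Tu = u'_rms / U_mean of a velocity record."""
    if len(u_samples) < 2:
        raise ValueError("need at least two velocity samples")
    u_mean = fmean(u_samples)
    # Population standard deviation = RMS of fluctuations about the mean
    u_rms = pstdev(u_samples, mu=u_mean)
    return u_rms / u_mean


if __name__ == "__main__":
    # Hypothetical record: 40 m/s mean flow with a 0.1 m/s sinusoidal fluctuation
    record = [40.0 + 0.1 * math.sin(2 * math.pi * k / 100) for k in range(1000)]
    tu = turbulence_intensity(record)
    print(f"Tu = {100 * tu:.3f} %  (below 0.3 %: {tu < 0.003})")
```

In practice the record would be high-pass filtered or detrended first so that slow drifts in the tunnel speed are not counted as turbulence.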

Measurement Equipment

- High-Speed Photron SA-Z Camera (20 kHz–1 MHz)

- LaVision IRO Image Intensifier

- Spectral Energies Burst-Mode Laser (1 kHz–1 MHz)

- Litron 15 Hz PIV Laser

- LaVision 15 Hz Stereo PIV System

- Sirah Dye Laser

- Excimer Laser

- FLIR IR Cameras

- Kulite Pressure Transducers, Thermocouples, and Other Assorted Pressure Transducers

- Capability for integration of force/moment balances

Other Computational Resources

University of Texas at San Antonio (UTSA)

The Office of Information Technology at UTSA supports an on-campus High Performance Computing research cluster, Shamu. This system is available free of charge to UTSA faculty and student researchers. Shamu components include:

- 113 physical servers

- 3580 total CPU cores

- 23TB of shared memory

- Dell Compellent highly fault-tolerant storage array with 150 TB of shared disk storage (expandable up to 1.05 PB depending on disk configuration)

- 2 GPU nodes, each containing 4 NVIDIA Tesla K80 GPU cards

- 1 node with 72 Xeon cores and 1.5 TB RAM

- The nodes are connected via 9 Mellanox 40 Gb/s InfiniBand switches and 5 Dell PowerConnect Ethernet switches

- Integration with various software packages, including ANSYS FLUENT and OpenFOAM

Texas Advanced Computing Center (TACC)

Verified modeling jobs can also be submitted to TACC's Stampede system at no extra cost. Stampede has 6,400 nodes (102,400 cores), 505 TB of memory, and 14 PB of shared disk space. Its components are connected by a fat-tree FDR InfiniBand interconnect for high efficiency and scalability. With more than 2 PFLOPS of peak performance from its host processors and more than 7 PFLOPS from its coprocessors, Stampede ranked 7th on the TOP500 list released on November 12, 2012.

TACC received a $60 million NSF grant to build Frontera, the nation's fastest academic supercomputer, scheduled to begin operations in 2019. The project will be able to access and use this system at no extra cost.